Yuhan Hu and Michael Suguitan, Ph.D. students in Professor Hoffman's research group, the Human-Robot Collaboration & Companionship Lab, each recently won an award at the 14th ACM/IEEE International Conference on Human-Robot Interaction (HRI), held in Daegu, Korea. The conference brings together researchers from around the world and seeks to showcase the very best research in human-robot interaction, with roots in robotics, psychology, cognitive science, HCI, human factors, artificial intelligence, organizational behavior, anthropology, and many other fields.

Yuhan Hu

Award: Best Full Paper

Paper Title: Using Skin Texture Change to Design Emotion Expression in Social Robots

Abstract of paper: We evaluate the emotional expression capacity of skin texture change, a new design modality for social robots. In contrast to the majority of robots that use gestures and facial movements to express internal states, we developed an emotionally expressive robot that communicates using dynamically changing skin textures. The robot’s shell is covered in actuated goosebumps and spikes, with programmable frequency and amplitude patterns. In a controlled study (n = 139) we presented eight texture patterns to participants in three interaction modes: online video viewing, in person observation, and touching the texture. For most of the explored texture patterns, participants consistently perceived them as expressing specific emotions, with similar distributions across all three modes. This indicates that a texture changing skin can be a useful new tool for robot designers. Based on the specific texture-to-emotion mappings, we provide actionable design implications, recommending using the shape of a texture to communicate emotional valence, and the frequency of texture movement to convey emotional arousal. Given that participants were most sensitive to valence when touching the texture, and were also most confident in their ratings using that mode, we conclude that touch is a promising design channel for human-robot communication.

Index Terms—Soft robotics; human-robot interaction; emotion expression; empirical study; texture-change; nonverbal behavior

Yuhan's project has been featured online:

- IEEE Spectrum: Feel What this Robot Feels Through Tactile Expressions - Inflatable spikes and goosebumps help this robot communicate.

- Cornell Chronicle: Robot prototype will let you feel how it’s ‘feeling’

- HRC2 (Human-Robot Collaboration & Companionship Lab): Goosebumps - Texture-Changing Robot Skin

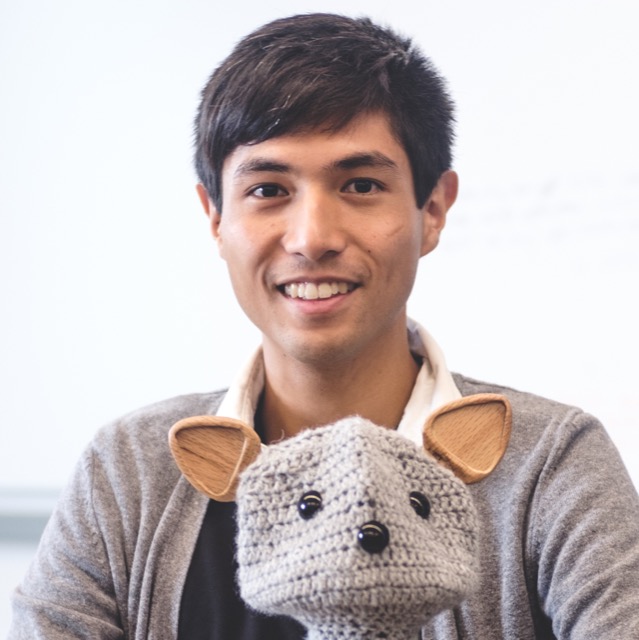

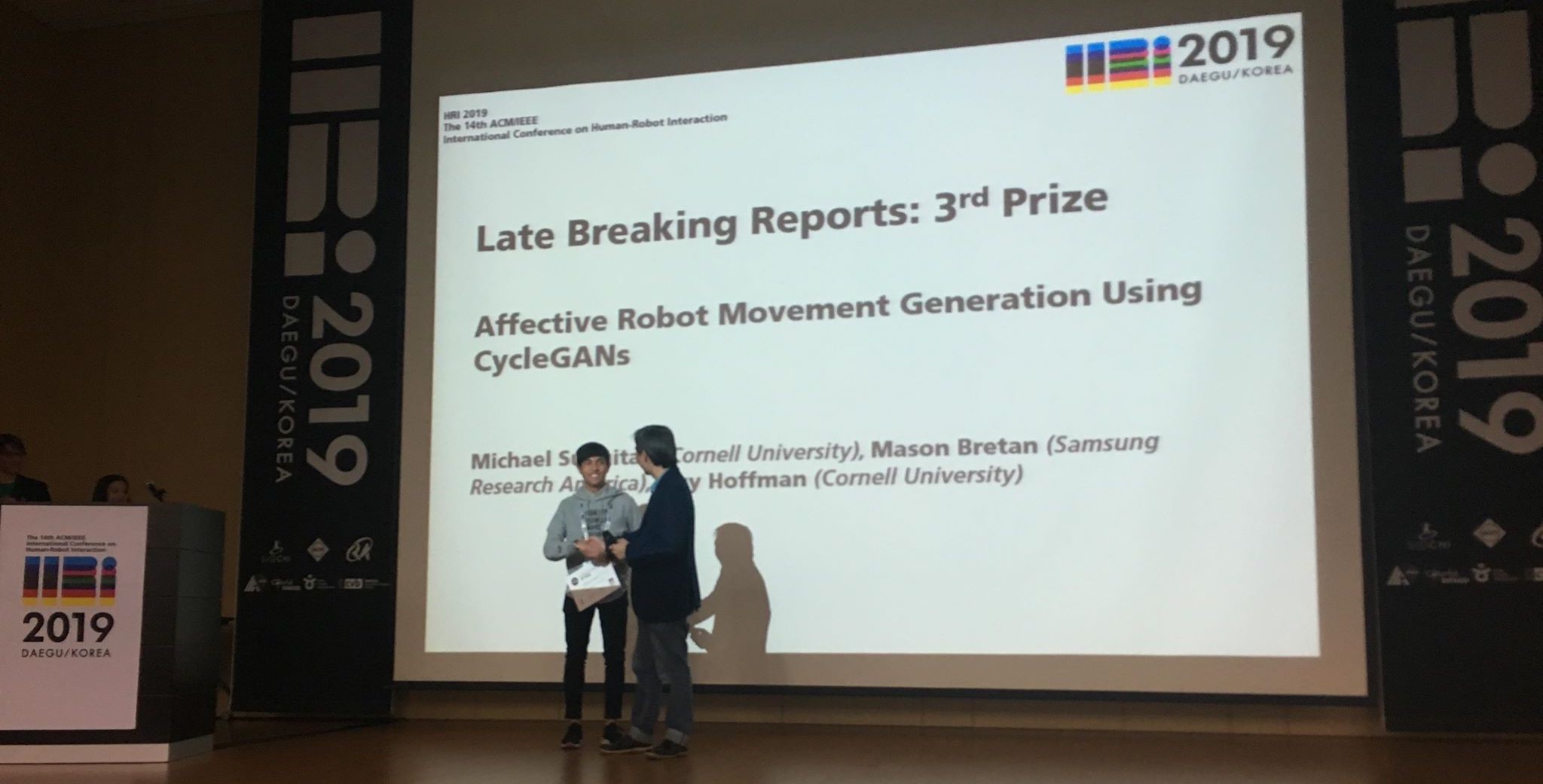

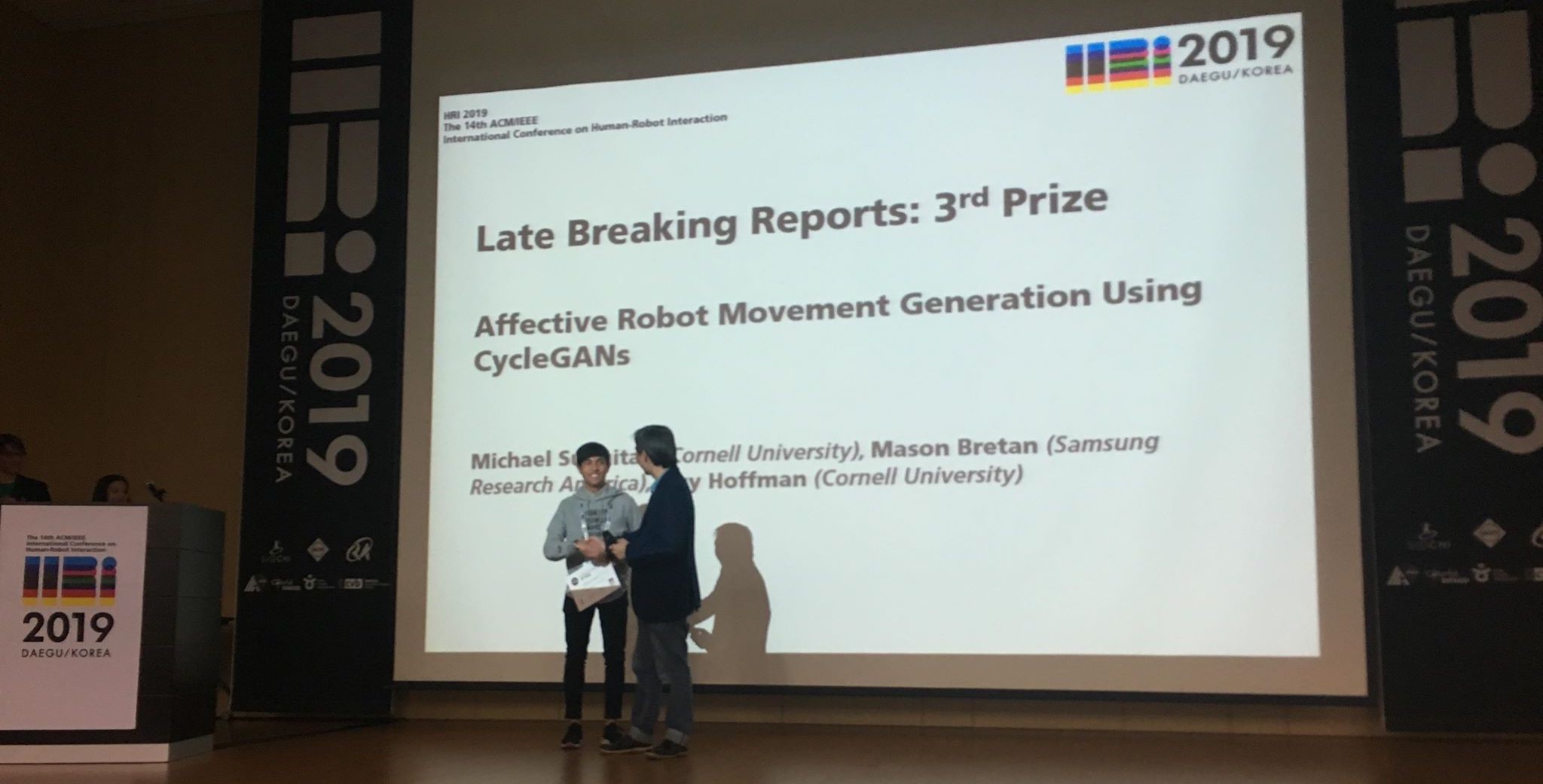

Michael Suguitan

Award: Late Breaking Reports Award - 3rd Prize

Paper Title: Affective Robot Movement Generation Using CycleGANs